Can you train a high-quality generative model using only a small 3D dataset?

That’s the core challenge that Oleksandr Fedoruk, Michał Kruk, and Konrad Klimaszewski tackled in our latest research, now published in the journal Sensors. You can read the full open-access paper here: https://www.mdpi.com/1424-8220/25/24/7404

To address data scarcity, we adapted the StyleGAN2-ADA architecture specifically for 3D voxelized images. Our project introduces a PyTorch implementation with full support for 3D voxel-based augmentations within the Adaptive Discriminator Augmentation (ADA) mechanism.
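To give a feel for what a voxel-based augmentation looks like, here is a minimal PyTorch sketch. It is not taken from the repository: the function name `augment_voxels`, the choice of axis flips plus 90-degree in-plane rotations, and the assumption that height equals width are all illustrative. What it does share with ADA is the idea that each transform is applied per sample with probability `p` and stays differentiable, so the same pipeline can be used on both real and generated volumes.

```python
from typing import Optional

import torch


def augment_voxels(
    volumes: torch.Tensor,
    p: float,
    generator: Optional[torch.Generator] = None,
) -> torch.Tensor:
    """Randomly flip and rotate a batch of volumes of shape (N, C, D, H, W).

    Hypothetical helper: each transform is applied to each sample
    independently with probability p. Assumes H == W so that 90-degree
    rotations in the (H, W) plane keep the tensor shape unchanged.
    """
    n = volumes.shape[0]
    out = volumes

    # Flip along depth, height, and width, each with probability p per sample.
    for axis in (2, 3, 4):
        mask = (torch.rand(n, generator=generator) < p).to(volumes.device)
        out = torch.where(mask.view(n, 1, 1, 1, 1), out.flip(dims=(axis,)), out)

    # Rotate by 90, 180, or 270 degrees in the (H, W) plane with probability p.
    rotated = []
    for sample in out:  # sample has shape (C, D, H, W)
        if torch.rand(1, generator=generator).item() < p:
            k = int(torch.randint(1, 4, (1,), generator=generator).item())
            sample = torch.rot90(sample, k, dims=(2, 3))
        rotated.append(sample)
    return torch.stack(rotated)
```

With `p = 0.0` the batch passes through unchanged, and with `p = 1.0` every sample is flipped and rotated while keeping its shape and voxel values.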

Using a limited sample of CT lung images, we verified:

🔶 Data Efficiency: The model successfully trains with fewer than 300 images per class.

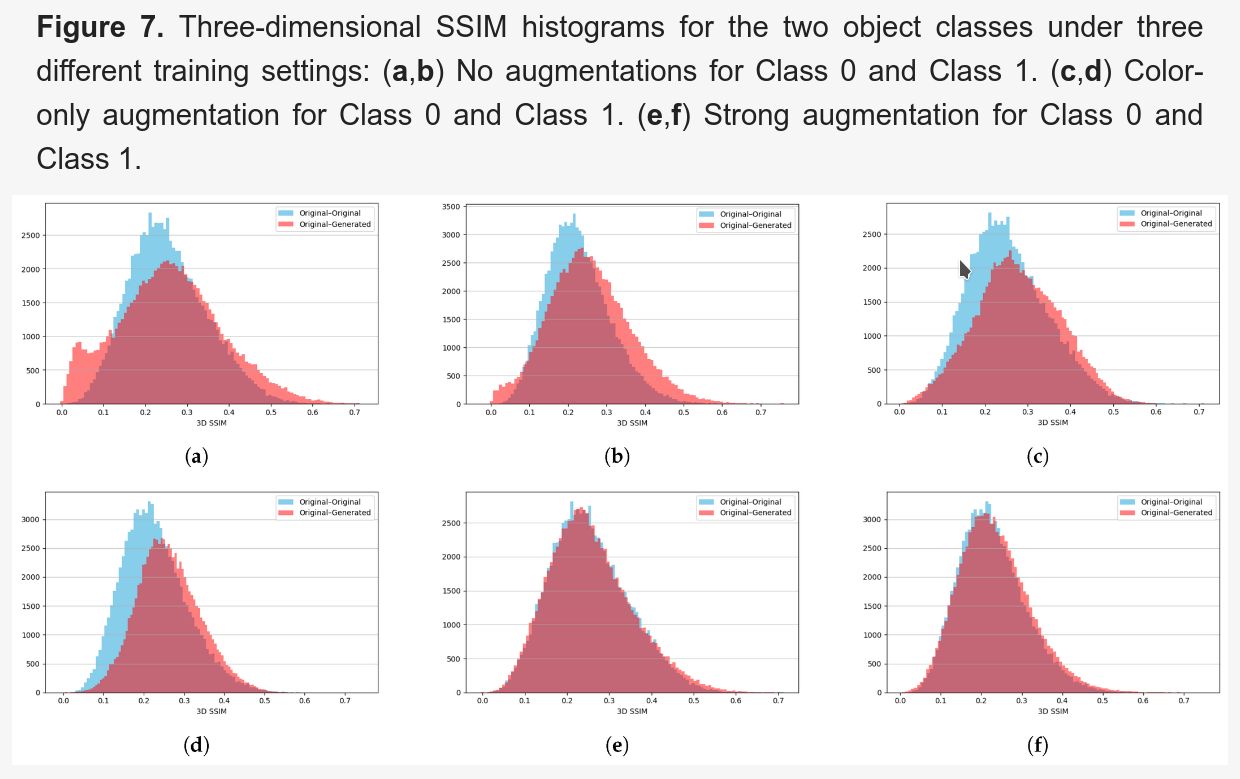

🔶 Structural Fidelity: Our implementation accurately reproduces the 3D structures and features of the original samples.

🔶 The ADA Advantage: We observed that, without the full ADA mechanism, StyleGAN2 trained on small 3D datasets suffers from discriminator overfitting and is prone to mode collapse.

🔶 Class Distinction: The incorporated style mechanism correctly generates distinct features for different image classes.
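For readers unfamiliar with how ADA counters discriminator overfitting, the sketch below follows the heuristic described in the original StyleGAN2-ADA paper: measure how confidently the discriminator scores real samples, then nudge the augmentation probability `p` up or down by a small fixed step. The function name `update_ada_p`, the default values, and the clamp to the range 0 to 1 are assumptions for illustration; the settings used in this work may differ.

```python
def update_ada_p(
    p: float,
    real_logits: list,
    target: float = 0.6,
    batch_size: int = 32,
    interval: int = 4,
    ada_kimg: int = 500,
) -> float:
    """Return the updated augmentation probability (hypothetical helper).

    real_logits: discriminator outputs on real samples collected since
    the last update. The overfitting indicator r_t is the mean sign of
    these outputs; values near 1 mean the discriminator is very
    confident on the training set.
    """
    signs = [1.0 if x > 0 else -1.0 if x < 0 else 0.0 for x in real_logits]
    r_t = sum(signs) / len(signs)

    # Step size chosen so that p can move from 0 to 1 over ada_kimg * 1000 images.
    step = batch_size * interval / (ada_kimg * 1000)

    if r_t > target:
        p += step  # too much overfitting: augment more
    elif r_t < target:
        p -= step  # too little overfitting: augment less

    # Clamping to [0, 1] is an assumption of this sketch.
    return min(max(p, 0.0), 1.0)
```

In a training loop this would be called every `interval` minibatches, with the returned `p` passed to the augmentation pipeline for the next steps.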

Such models open up several exciting possibilities:

🔷 Synthetic Datasets: Rapidly generating artificial data for the validation of downstream ML models.

🔷 Medical Data Augmentation: Enhancing small medical datasets for predictive modeling, particularly through inpainting techniques.

🔷 Privacy & Anonymization: Facilitating the anonymization of medical images through federated learning of generative models.

Open Source: The full implementation and voxelized augmentation tools are available on GitHub: https://github.com/fedorukol/3d-stylegan2-ada

The research was carried out in cooperation between Narodowe Centrum Badań Jądrowych and Szkoła Główna Gospodarstwa Wiejskiego w Warszawie.